The American Trends Panel survey methodology

Overview

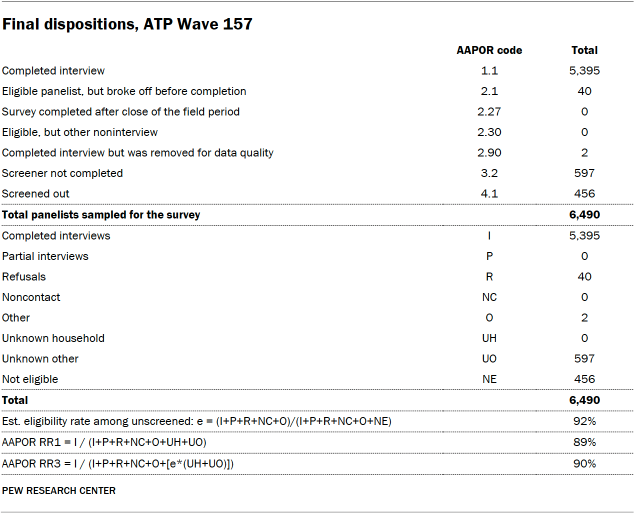

Data in this report comes from Wave 157 of the American Trends Panel (ATP), Pew Research Center’s nationally representative panel of randomly selected U.S. adults. The survey was conducted from Oct. 7 to Oct. 13, 2024, among a sample of ATP members who indicated that they currently work either full or part time for pay. A total of 5,395 panelists responded out of 6,490 who were sampled, for a survey-level response rate of 90% (AAPOR RR3).
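The survey-level response rate uses the AAPOR RR3 definition: completed interviews divided by the estimated number of eligible cases. Cases found to be ineligible (for example, panelists screened out because they are not currently working) are excluded from the denominator, so RR3 need not equal the simple ratio of respondents to sampled panelists. The minimal Python sketch below shows the formula. `aapor_rr3` is a hypothetical helper, not a Pew or SSRS function, and the disposition counts are invented because the Wave 157 disposition table is not reproduced in this text.

```python
def aapor_rr3(I, P, R, NC, O, UH, UO, e):
    """AAPOR Response Rate 3 (illustrative sketch).

    I: complete interviews        P: partial interviews
    R: refusals and break-offs    NC: non-contacts
    O: other eligible nonresponse
    UH, UO: cases of unknown eligibility
    e: estimated proportion of unknown-eligibility cases that are eligible
    Cases known to be ineligible are left out of every count.
    """
    estimated_eligible = (I + P) + (R + NC + O) + e * (UH + UO)
    return I / estimated_eligible


# Hypothetical dispositions, for illustration only.
rate = aapor_rr3(I=900, P=10, R=50, NC=40, O=0, UH=0, UO=0, e=0.5)
print(f"RR3 = {rate:.0%}")
```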

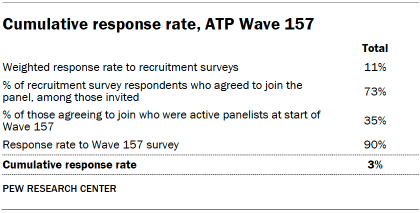

The cumulative response rate accounting for nonresponse to the recruitment surveys and attrition is 3%. The break-off rate among panelists who logged on to the survey and completed at least one item is 1%. The margin of sampling error for the full sample of 5,395 respondents is plus or minus 1.7 percentage points.
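Because the margin of error accounts for weighting as well as sample size, it is larger than the textbook figure for a simple random sample of the same size. The rough sketch below makes that relationship concrete using the standard 95% formula for a proportion, inflated by a design effect. The worst-case p = 0.5, the design-effect framing and the helper name `margin_of_error` are assumptions for illustration, not Pew's published computation.

```python
import math


def margin_of_error(n, deff=1.0, p=0.5, z=1.96):
    """95% margin of error for a proportion, in percentage points.

    deff is a design effect: the variance inflation caused by weighting.
    deff = 1 corresponds to a simple random sample of size n.
    """
    return 100 * z * math.sqrt(deff * p * (1 - p) / n)


n = 5_395
srs_moe = margin_of_error(n)          # unweighted (simple random sample) margin
implied_deff = (1.7 / srs_moe) ** 2   # design effect consistent with a 1.7-point margin
print(round(srs_moe, 2), round(implied_deff, 2))
```

Under these assumptions, an unweighted sample of 5,395 would have a margin of about 1.3 points, so a 1.7-point margin is consistent with a design effect of roughly 1.6. Pew's exact calculation may differ.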

SSRS conducted the survey for Pew Research Center via online (n=5,334) and live telephone (n=61) interviewing. Interviews were conducted in both English and Spanish.

To learn more about the ATP, read “About the American Trends Panel.”

Panel recruitment

Since 2018, the ATP has used address-based sampling (ABS) for recruitment. A study cover letter and a pre-incentive are mailed to a stratified, random sample of households selected from the U.S. Postal Service’s Computerized Delivery Sequence File. This Postal Service file has been estimated to cover 90% to 98% of the population.[8] Within each sampled household, the adult with the next birthday is selected to participate. Other details of the ABS recruitment protocol have changed over time but are available upon request.[9] Prior to 2018, the ATP was recruited using landline and cellphone random-digit-dial surveys administered in English and Spanish.
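The next-birthday rule can be expressed as a small function. This is only an illustration, since in practice household members apply the rule themselves from the cover letter. The function names, the "on or after the reference date" convention and the handling of Feb. 29 birthdays are assumptions.

```python
from datetime import date


def next_birthday(birthday: date, today: date) -> date:
    """First occurrence of the birthday on or after `today`.

    Assumption: a Feb. 29 birthday counts as March 1 in non-leap years.
    """
    year = today.year
    while True:
        try:
            candidate = date(year, birthday.month, birthday.day)
        except ValueError:  # Feb. 29 in a non-leap year
            candidate = date(year, 3, 1)
        if candidate >= today:
            return candidate
        year += 1


def select_adult(household: dict[str, date], today: date) -> str:
    """Return the household member whose birthday comes next.

    Ties (shared birthdays) go to whoever is listed first.
    """
    return min(household, key=lambda person: next_birthday(household[person], today))


household = {"adult_a": date(1980, 12, 1), "adult_b": date(1975, 3, 15)}
print(select_adult(household, date(2024, 10, 7)))
```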

A national sample of U.S. adults has been recruited to the ATP approximately once per year since 2014. In some years, the recruitment has included additional efforts (known as an “oversample”) to improve the accuracy of data for underrepresented groups. For example, Hispanic adults, Black adults and Asian adults were oversampled in 2019, 2022 and 2023, respectively.

Sample design

The overall target population for this survey was noninstitutionalized people ages 18 and older living in the United States who work for pay either full time or part time. All active panel members who reported working either full or part time for pay in ATP Wave 150 (fielded in July 2024) were invited to participate in this wave. Respondents were again asked about their current employment situation at the beginning of this survey, and those who indicated that they were not currently working for pay were screened out.

Questionnaire development and testing

The questionnaire was developed by Pew Research Center in consultation with SSRS. The web program used for online respondents was rigorously tested on both PC and mobile devices by the SSRS project team and Pew Research Center researchers. The SSRS project team also populated test data that was analyzed in SPSS to ensure the logic and randomizations were working as intended before launching the survey.

Incentives

All respondents were offered a post-paid incentive for their participation. Respondents could choose to receive the post-paid incentive in the form of a check or gift code to Amazon.com, Target.com or Walmart.com. Incentive amounts ranged from $5 to $20 depending on whether the respondent belongs to a part of the population that is harder or easier to reach. Differential incentive amounts were designed to increase panel survey participation among groups that traditionally have low survey response propensities.

Data collection protocol

The data collection field period for this survey was Oct. 7 to Oct. 13, 2024. Surveys were conducted via self-administered web survey or by live telephone interviewing.

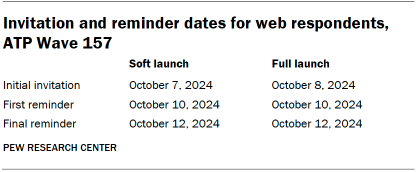

For panelists who take surveys online:[10] Postcard notifications were mailed to a subset on Oct. 7.[11] Survey invitations were sent out in two separate launches: soft launch and full launch. Sixty panelists were included in the soft launch, which began with an initial invitation sent on Oct. 7. All remaining English- and Spanish-speaking sampled online panelists were included in the full launch and were sent an invitation on Oct. 8.

Panelists participating online were sent an email invitation and up to two email reminders if they did not respond to the survey. ATP panelists who consented to SMS messages were sent an SMS invitation with a link to the survey and up to two SMS reminders.

For panelists who take surveys over the phone with a live interviewer: Prenotification postcards were mailed on Oct. 4. Soft launch took place on Oct. 7 and involved dialing until a total of three interviews had been completed. All remaining English- and Spanish-speaking sampled phone panelists’ numbers were dialed throughout the remaining field period. Panelists who take surveys via phone can receive up to six calls from trained SSRS interviewers.

Data quality checks

To ensure high-quality data, Center researchers performed data quality checks to identify any respondents showing patterns of satisficing. This includes checking whether respondents left questions blank at very high rates or always selected the first or last answer presented. As a result of this checking, two ATP respondents were removed from the survey dataset prior to weighting and analysis.
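The two checks described above can be sketched for a single respondent as shown below. The data layout, the function name and the 50% blank-rate cutoff are hypothetical, because the Center's actual thresholds are not given here. "First" and "last" refer to the order in which options were presented, and flags like these would normally prompt review rather than trigger automatic removal.

```python
from typing import Optional, Sequence, Tuple

# One entry per question: (index of chosen option as presented, number of options),
# or None if the question was left blank.
Response = Optional[Tuple[int, int]]


def satisficing_flags(responses: Sequence[Response], max_blank_rate: float = 0.5) -> dict:
    """Flag high item nonresponse and always-first / always-last answering.

    max_blank_rate is a hypothetical cutoff chosen for illustration.
    """
    answered = [r for r in responses if r is not None]
    blank_rate = 1 - len(answered) / len(responses)
    always_first = bool(answered) and all(choice == 0 for choice, _ in answered)
    always_last = bool(answered) and all(choice == n - 1 for choice, n in answered)
    return {
        "high_blank_rate": blank_rate > max_blank_rate,
        "always_first": always_first,
        "always_last": always_last,
    }


print(satisficing_flags([(0, 4), (0, 5), (0, 3), None]))
```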

Weighting

The ATP data is weighted in a process that accounts for multiple stages of sampling and nonresponse that occur at different points in the panel survey process. First, each panelist begins with a base weight that reflects their probability of recruitment into the panel. Weighting parameters were based on the full set of ATP members who were potentially eligible for inclusion in the sample prior to any screening. Next, the base weights for all ATP members who responded to the 2024 Annual Profile Survey (Wave 150) were calibrated to align with the population benchmarks in the accompanying table to create a full-panel weight.
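The calibration step can be sketched as raking (iterative proportional fitting), a common way to make weighted sample margins match population benchmarks. This is an assumption about the technique, not Pew's production code. The base weights are taken as the inverse of each panelist's recruitment probability, and the dimensions, categories, probabilities and targets in the example are all hypothetical.

```python
def rake(base_weights, categories, targets, max_iter=100, tol=1e-10):
    """Raking (iterative proportional fitting) sketch.

    base_weights: starting weights, e.g. 1 / probability of recruitment
    categories:   {dimension: [category of each respondent]}
    targets:      {dimension: {category: population share}}, shares summing to 1
    """
    weights = list(base_weights)
    for _ in range(max_iter):
        largest_adjustment = 0.0
        for dim, labels in categories.items():
            total = sum(weights)
            current = {}
            for w, label in zip(weights, labels):
                current[label] = current.get(label, 0.0) + w
            # Scale everyone in a category by (target share / current weighted share).
            factors = {label: targets[dim][label] * total / current[label] for label in current}
            weights = [w * factors[label] for w, label in zip(weights, labels)]
            largest_adjustment = max([largest_adjustment] + [abs(f - 1) for f in factors.values()])
        if largest_adjustment < tol:  # every margin already matched its target
            break
    return weights


recruit_prob = [0.001, 0.0005, 0.002, 0.001]   # hypothetical recruitment probabilities
base = [1 / p for p in recruit_prob]           # inverse-probability base weights
cats = {"gender": ["M", "M", "F", "F"], "age": ["18-49", "50+", "18-49", "50+"]}
tgts = {"gender": {"M": 0.49, "F": 0.51}, "age": {"18-49": 0.55, "50+": 0.45}}
w = rake(base, cats, tgts)
```

Raking adjusts one dimension at a time and cycles until all margins line up, which is why it needs only marginal benchmarks rather than a full cross-tabulation of the population.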

The full-panel weight for panelists who completed the survey was calibrated to align with the distribution for the entire sample (including those who did not respond to Wave 157) on the following dimensions: age, gender, education, race/ethnicity, years lived in the U.S., volunteerism, voter registration, frequency of internet use, religion, party affiliation, census region and metropolitan status. Additionally, respondents’ employment status (whether they work full or part time for pay) as reported in Wave 157 was weighted to match the distribution from Wave 150. These weights were then trimmed at the 1st and 99th percentiles to reduce the loss in precision stemming from variance in the weights. Sampling errors and tests of statistical significance take into account the effect of weighting.
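Trimming caps extreme weights, and Kish's approximation, n·Σw² / (Σw)², shows why doing so reduces the loss in precision. The sketch below is illustrative. The percentile definition (`statistics.quantiles` with `method="inclusive"`) and the helper names are assumptions. It also does not rescale the weights after trimming, which leaves percentage estimates unchanged, since those depend only on relative weights.

```python
import statistics


def trim_weights(weights, lower_pct=1, upper_pct=99):
    """Cap weights at the given percentiles of their own distribution."""
    cuts = statistics.quantiles(weights, n=100, method="inclusive")  # 99 cut points
    low, high = cuts[lower_pct - 1], cuts[upper_pct - 1]
    return [min(max(w, low), high) for w in weights]


def kish_design_effect(weights):
    """Kish's design effect from unequal weighting: n * sum(w^2) / (sum w)^2."""
    n = len(weights)
    return n * sum(w * w for w in weights) / sum(weights) ** 2


raw = [1.0] * 99 + [50.0]  # one extreme weight
trimmed = trim_weights(raw)
print(round(kish_design_effect(raw), 2), round(kish_design_effect(trimmed), 2))
```

In this toy example, trimming the single outlying weight lowers the design effect from about 11.7 to about 1.0.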

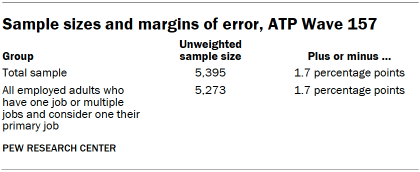

The following table shows the unweighted sample sizes and the error attributable to sampling that would be expected at the 95% level of confidence for different groups in the survey.

Sample sizes and sampling errors for other subgroups are available upon request. In addition to sampling error, one should bear in mind that question wording and practical difficulties in conducting surveys can introduce error or bias into the findings of opinion polls.

Dispositions and response rates

A note about the Asian adult sample

This survey includes a total sample size of 389 employed Asian adults. The sample primarily includes English-speaking Asian adults and, therefore, may not be representative of the overall Asian adult population. Despite this limitation, it is important to report the views of Asian adults on the topics in this study. As always, Asian adults’ responses are incorporated into the general population figures throughout this report.

How family income tiers are calculated

Family income data reported in this study is adjusted for household size and cost-of-living differences by geography. Panelists then are assigned to income tiers that are based on the median adjusted family income of all American Trends Panel members. The process uses the following steps:

- First, panelists are assigned to the midpoint of the income range they selected in a family income question that was measured on either the most recent annual profile survey or, for newly recruited panelists, their recruitment survey. This provides an approximate income value that can be used in calculations for the adjustment.

- Next, these income values are adjusted for the cost of living in the geographic area where the panelist lives. This is calculated using price indexes published by the U.S. Bureau of Economic Analysis. These indexes, known as Regional Price Parities (RPP), compare the prices of goods and services across all U.S. metropolitan statistical areas as well as non-metro areas with the national average prices for the same goods and services. The most recent available data at the time of the annual profile survey is from 2022. Those who fall outside of metropolitan statistical areas are assigned the overall RPP for their state’s non-metropolitan area.

- Family incomes are further adjusted for the number of people in a household using the methodology from Pew Research Center’s previous work on the American middle class. This is done because a four-person household with an income of, say, $50,000 faces a tighter budget constraint than a two-person household with the same income.

- Panelists are then assigned an income tier. “Middle-income” adults are in families with adjusted family incomes that are between two-thirds and double the median adjusted family income for the full ATP at the time of the most recent annual profile survey. The median adjusted family income for the panel is roughly $74,100. Using this median income, the middle-income range is about $49,400 to $148,200. Lower-income families have adjusted incomes less than $49,400 and upper-income families have adjusted incomes greater than $148,200 (all figures expressed in 2023 dollars and scaled to a household size of three). If a panelist did not provide their income and/or their household size, they are assigned “no answer” in the income tier variable.
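The steps above can be sketched in Python. The median and tier cutoffs come from the text. The function names are hypothetical, and the household-size step assumes a square-root equivalence scale rescaled to a household of three. That assumption is labeled in the code, because the middle-class methodology it refers to is not reproduced here.

```python
import math
from typing import Optional

MEDIAN_ADJUSTED_INCOME = 74_100  # panel median from the text (2023 dollars, household of three)


def adjusted_family_income(income_midpoint: float, rpp: float, household_size: int) -> float:
    """Adjust a family income midpoint for local prices and household size.

    rpp: Regional Price Parity for the panelist's area (national average = 100).
    Assumption: household size uses a square-root equivalence scale,
    rescaled to a household of three.
    """
    cost_adjusted = income_midpoint / (rpp / 100)
    return cost_adjusted * math.sqrt(3 / household_size)


def income_tier(adjusted: Optional[float], median: float = MEDIAN_ADJUSTED_INCOME) -> str:
    """Assign the lower / middle / upper tier relative to the panel median."""
    if adjusted is None:           # income or household size not provided
        return "no answer"
    if adjusted < 2 / 3 * median:  # below about $49,400
        return "lower"
    if adjusted > 2 * median:      # above $148,200
        return "upper"
    return "middle"


# A $50,000 income in an area at the national price level (RPP = 100):
for size in (2, 3, 4):
    adj = adjusted_family_income(50_000, 100, size)
    print(size, round(adj), income_tier(adj))
```

Under these assumptions, the same $50,000 falls in the middle tier for a two- or three-person household but in the lower tier for a four-person household. This mirrors the budget-constraint point made in the household-size step.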

Two examples of how a given area’s cost-of-living adjustment was calculated are as follows: the Pine Bluff metropolitan area in Arkansas is a relatively inexpensive area, with a price level that is 19.1% less than the national average. The San Francisco-Oakland-Berkeley metropolitan area in California is one of the most expensive areas, with a price level that is 17.9% higher than the national average. Income in the sample is adjusted to make up for this difference. As a result, a family with an income of $40,400 in the Pine Bluff area is as well off financially as a family of the same size with an income of $58,900 in San Francisco.
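The figures in this example are consistent with dividing the Pine Bluff income by that area's price level and multiplying by San Francisco's, each expressed relative to the national average:

$$
\$40{,}400 \times \frac{1 + 0.179}{1 - 0.191} = \$40{,}400 \times \frac{1.179}{0.809} \approx \$58{,}877 \approx \$58{,}900
$$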

Current Population Survey methodology

Most of the analysis in this report is based on the Current Population Survey (CPS) monthly files. Administered jointly by the U.S. Census Bureau and the Bureau of Labor Statistics, the CPS is a monthly survey that samples approximately 60,000 occupied households and typically completes interviews with about 50,000 of them. It is the source of the nation’s official statistics on unemployment and is explicitly designed to survey the labor force. It is representative of the civilian noninstitutionalized population.

The CPS microdata used in this report is the Integrated Public Use Microdata Series (IPUMS), provided by the University of Minnesota. IPUMS assigns uniform codes, to the extent possible, to data collected in the CPS over the years. Read more about IPUMS, including variable definitions and sampling error.

Employee tenure data is collected every two years as part of a supplement to the CPS.

Earnings of full-time, full-year workers are based on the CPS Annual Social and Economic Supplement (ASEC), collected every March. Following U.S. Census Bureau practice, earnings are adjusted for inflation using the Chained Consumer Price Index for All Urban Consumers (C-CPI-U). The Census Bureau generated entropy balance weights for the 2020 and 2021 ASEC to account for nonrandom nonresponse. Our analysis used these weights.
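Converting nominal earnings to constant dollars means scaling by the ratio of the price index in the base year to the index in the survey year. Estimates are then computed with the survey weights. The sketch below shows both steps using a weighted median. The index levels, earnings and weights are hypothetical placeholders rather than published C-CPI-U or ASEC figures, and the median is only one possible summary statistic.

```python
def to_constant_dollars(nominal: float, index_survey_year: float, index_base_year: float) -> float:
    """Re-express nominal earnings in base-year dollars using a price index such as the C-CPI-U."""
    return nominal * index_base_year / index_survey_year


def weighted_median(values, weights):
    """Smallest value at which the cumulative weight reaches half of the total weight."""
    pairs = sorted(zip(values, weights))
    half = sum(weights) / 2
    cumulative = 0.0
    for value, weight in pairs:
        cumulative += weight
        if cumulative >= half:
            return value
    raise ValueError("values must not be empty")


# Hypothetical index levels and person-level records (earnings plus survey weights).
INDEX_2000, INDEX_2023 = 100.0, 160.0
earnings_2000 = [30_000, 45_000, 60_000]
survey_weights = [1_500.0, 2_000.0, 1_000.0]
real = [to_constant_dollars(e, INDEX_2000, INDEX_2023) for e in earnings_2000]
print(weighted_median(real, survey_weights))
```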

The analyses of multiple job-holding, industrial change, professional certificates and the changing demography of the workforce are based on information collected in the basic monthly CPS files.

Survey of Income and Program Participation methodology

The analysis of how workers are paid uses information collected in the Census Bureau’s 2023 Survey of Income and Program Participation (SIPP). It is a nationally representative annual survey that focuses on the income of U.S. households and their participation in government programs. It also asks respondents about their employment during the preceding calendar year, including earnings from commissions, tips and bonus pay.

Survey of Household Economics and Decisionmaking methodology

Finally, workers’ assessment of how much autonomy they have in their jobs is derived from the 2023 Survey of Household Economics and Decisionmaking (SHED). The Federal Reserve has fielded the SHED annually in the fourth quarter of each year since 2013. It is perhaps best known for its assessment of adults’ overall financial well-being but includes batteries of questions on a number of other topics, including employment. The SHED sample is representative of the civilian, noninstitutionalized adult population. The 2023 SHED had 11,400 respondents.

© Pew Research Center 2024